Edge Computing Consulting

P&C Global's Edge Computing Consulting Services

For C-suite leaders making edge computing decisions today, the calculus has shifted from a connectivity question to a capital-allocation question, and edge computing consulting now has to answer both. CIOs are increasingly expected to justify whether each edge node materially improves latency, resilience, or operational performance relative to its infrastructure cost. CFOs now evaluate cloud-egress fees, AI-inference workloads, and distributed infrastructure spending alongside traditional facilities and technology investments, while chief security officers monitor the expanding OT-cyber surface created by connected fleets and distributed environments. Executive leadership no longer views a reference architecture as a sufficient outcome. The C-suite expects a sequenced rollout with clear ownership, KPI baselines, and a workload-placement strategy capable of sustaining operational and financial performance through deployment and scale.

P&C Global’s edge computing consultants approach the discipline as an enterprise operating program rather than a standalone infrastructure initiative. Engagements begin with a use-case diagnostic that identifies where latency requirements, data-sovereignty constraints, operational resilience, or inference economics justify distributed compute architectures — whether within mature operating environments or greenfield deployments. The work progresses through six integrated decisions — diagnose, define, model, sequence, govern, measure — each aligned to measurable baselines leadership commits to sustaining over time. The result is sustained uptime, deterministic latency, operational resilience, and infrastructure-efficiency performance supported by governance structures and operating cadence designed to scale with the enterprise.

Edge Computing Challenges Facing Senior Operators

Organizations typically engage an edge computing consultancy when operational complexity begins outpacing centralized infrastructure models. In most cases, the primary challenge is not the architecture itself, but the operating model required to sustain distributed environments at scale. CapEx pressure tightens just as deployment scope expands; service-level expectations have already moved past what centralized compute can deliver; site footprints are heterogeneous enough to defeat a templated rollout; distributed operations introduce reliability risks the central network operations center has never absorbed; telemetry across the fleet is patchy enough to disguise drift; and security, patching, and compliance obligations sprawl faster than the operating team can cover them. These recurring pressures (CapEx constraint, real-time capacity gap, heterogeneous footprints, distributed-operations risk, telemetry blind spots, and security sprawl) explain why edge programs stall before scale.

CapEx & Network Cost Constraining Deployment

Capital pressure and network-cost volatility create immediate strain on edge deployment economics. Hardware-refresh cycles compete directly with cloud-egress costs, bandwidth volatility affects long-term network contracts, and expansion initiatives often draw investment away from maintaining existing environments. Without a workload-placement model tied directly to latency requirements, inference economics, and operational value, leadership teams frequently struggle to distinguish strategic edge investments from discretionary infrastructure spending.

Real-Time Capacity Gap Outpacing Centralized Compute

The real-time capacity gap is the one leadership most often underestimates: service expectations move with the customer benchmark, while central infrastructure moves with the planning cycle. Without edge computing consulting services tied to a published technology roadmap, the rollout funds workloads that do not earn the spend.

Heterogeneous Site Footprints Complicating Rollouts

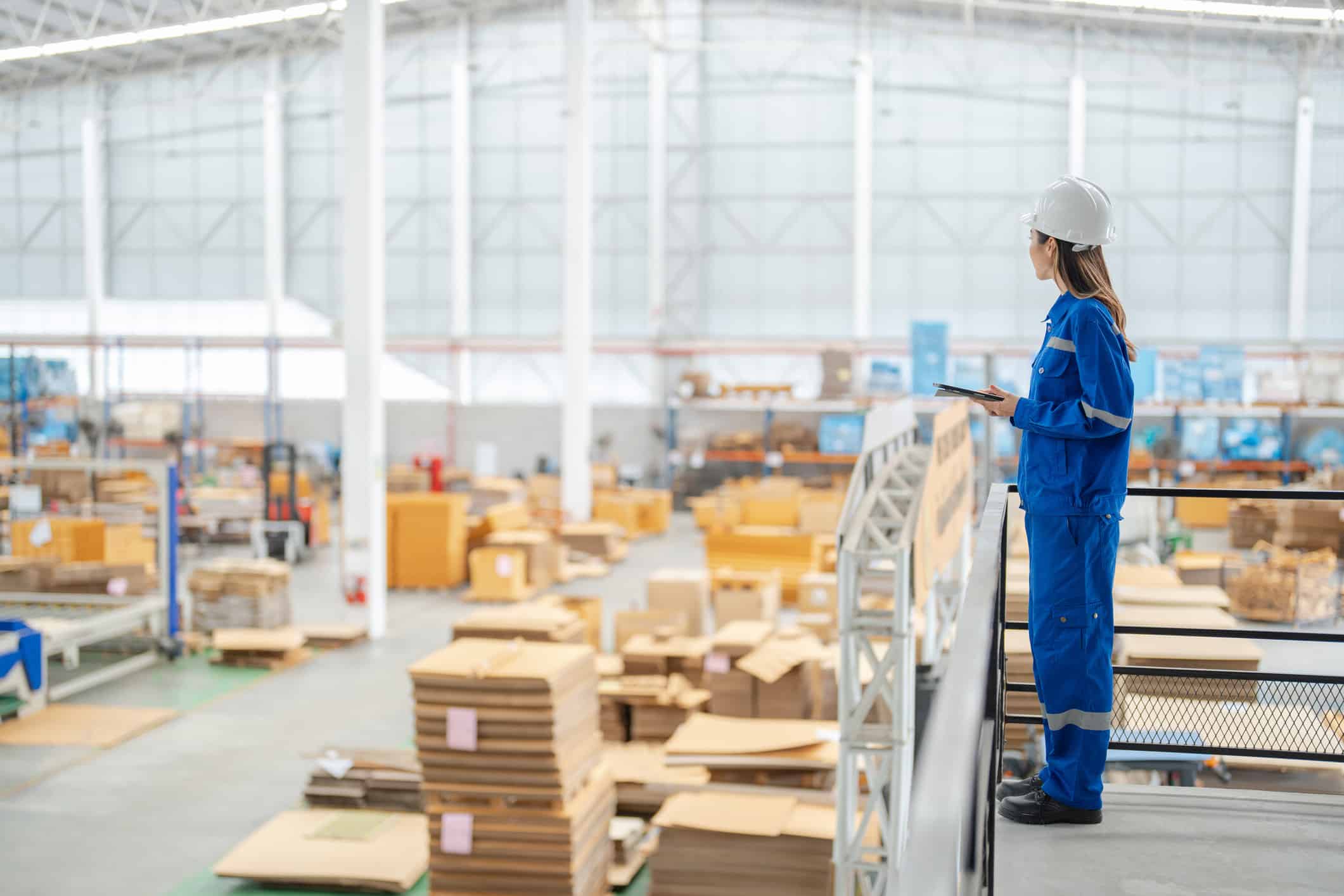

Infrastructure heterogeneity becomes highly visible once edge programs move beyond pilot deployments. Manufacturing environments, retail operations, field depots, transportation hubs, and customer-premise devices all operate within different connectivity, power, and environmental constraints. As a result, standardized rollout models frequently fail when deployment conditions change across sites. Without a reference architecture with profiles per footprint, every rollout wave restarts the integration debate.

Distributed Operations Risk Threatening Reliability

Distributed-operations and remote-maintenance risk shows up when one field engineer becomes the constraint on uptime, and it is amplified when supply chain optimization depends on edge-node data. Without a remote-operations model with clear escalation paths and automated patching, every site issue becomes an executive escalation.

Telemetry & Connectivity Gaps Weakening Edge Visibility

Gaps in telemetry, observability, and connectivity leave the program managing what the dashboard shows, not what the fleet is doing. Connectivity drops disguise themselves as software faults, software faults disguise themselves as data-quality issues, and the operating review argues over interpretation. Without node-level, workload-level, and network-level observability integrated into operating reviews, performance degradation and operational drift remain hidden within aggregate reporting metrics.
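The point about aggregate metrics hiding node-level drift can be shown with a small worked example. This is a minimal sketch with made-up numbers: the site names, latency samples, and the 30 ms budget are illustrative assumptions, not client data or a P&C Global tool.

```python
from statistics import mean
from typing import Dict, List

def degraded_nodes(latency_ms_by_node: Dict[str, List[float]],
                   budget_ms: float) -> List[str]:
    """Return the nodes whose own mean latency exceeds the budget."""
    return sorted(
        node
        for node, samples in latency_ms_by_node.items()
        if mean(samples) > budget_ms
    )

# Hypothetical latency samples (milliseconds) for three edge sites.
fleet = {
    "site-a": [12.0, 14.0, 13.0],
    "site-b": [11.0, 12.0, 13.0],
    "site-c": [48.0, 52.0, 50.0],
}

BUDGET_MS = 30.0  # assumed latency budget for this illustration

# Fleet-wide average over every sample, as an aggregate dashboard might report it.
fleet_mean = mean(s for samples in fleet.values() for s in samples)

# The aggregate sits under budget while one site is well over it.
over_budget = degraded_nodes(fleet, BUDGET_MS)
```

In this example the fleet-wide mean stays under the budget even though one site exceeds it, which is the kind of gap a node-level view in the operating review is meant to surface.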

Security & Patching Sprawl Tightening Across Edge Fleet

Security, patching, and compliance sprawl across the edge fleet is the governance pressure that compounds every other challenge. Connected nodes multiply the OT-cyber surface, regulatory obligations vary by jurisdiction, and patch management at edge scale overwhelms the central security team. Sustainable edge deployment requires a clearly defined security, patching, and governance operating model capable of supporting resilience, compliance, and operational continuity across geographically distributed environments.

Our Approach to Edge Computing Consulting

P&C Global’s edge computing consultants follow a six-stage approach that aligns strategic planning with operational execution, with each step tied directly to a leadership decision and measurable baseline. The sequence is deliberate: assess use-case suitability before defining topology and reference architecture; establish the architecture before modeling workload placement against latency, resilience, and cost requirements; finalize placement strategy before sequencing deployment; then activate the security, governance, and measurement systems that guide execution through rollout and scale. Each stage produces executive-level decision artifacts alongside KPIs field and infrastructure teams carry into subsequent operating cycles.

Edge Use-Case Diagnostic & Workload Suitability Baseline

The engagement begins with an edge use-case diagnostic and workload suitability baseline that identifies which workloads justify distributed placement and where latency, bandwidth, sovereignty, or inference economics support edge deployment. The assessment applies equally to existing infrastructure environments and greenfield deployments. When product architecture or application design emerges as the primary constraint, parallel digital product initiatives are frequently advanced to align platform strategy with infrastructure modernization.

Edge Strategy, Topology Strategy & Reference Architecture

Once the diagnostic is complete, the team refines the edge strategy, deployment topology, and reference architecture into an operating framework infrastructure and operations teams can execute at scale. Vendor evaluation and architecture analysis determine which deployment models — far edge, near edge, regional, or hybrid — best align to operational requirements and long-term economics. The result is a reference architecture and workload-placement framework leadership can sustain through deployment and operational governance.

Workload Placement Modeling Roadmap & Sequencing

When the strategy is designed into a roadmap, the edge computing consulting firm completes workload-placement analysis and infrastructure cost modeling across edge and cloud environments. Workloads are evaluated against latency sensitivity, data-volume requirements, sovereignty obligations, resilience expectations, and inference economics to determine optimal placement strategy. These efforts are frequently coordinated with robotics initiatives when distributed control loops, automation environments, or real-time operational systems depend directly on edge performance.
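The placement criteria above can be sketched as a simple decision rule. This is an illustrative sketch only, not P&C Global's model: the `Workload` fields, the `place` function, the rule order, and both cost constants are hypothetical assumptions chosen to make the logic concrete.

```python
from dataclasses import dataclass
from typing import Tuple

# Hypothetical cost inputs, for illustration only.
CLOUD_TRANSFER_COST_PER_GB = 0.08
EDGE_NODE_COST_PER_MONTH = 400.0

@dataclass(frozen=True)
class Workload:
    name: str
    max_latency_ms: float          # latency budget the workload must meet
    cloud_round_trip_ms: float     # estimated round trip to the cloud region
    data_gb_per_month: float       # volume that would otherwise go to the cloud
    must_stay_in_country: bool     # sovereignty obligation
    needs_offline_operation: bool  # must keep running if the link drops

def place(w: Workload, cloud_region_in_country: bool) -> Tuple[str, str]:
    """Return (placement, deciding factor). Hard constraints come before cost."""
    if w.must_stay_in_country and not cloud_region_in_country:
        return "edge", "sovereignty"
    if w.cloud_round_trip_ms > w.max_latency_ms:
        return "edge", "latency"
    if w.needs_offline_operation:
        return "edge", "resilience"
    # No hard constraint applies, so compare monthly transfer cost to node cost.
    transfer_cost = w.data_gb_per_month * CLOUD_TRANSFER_COST_PER_GB
    if transfer_cost > EDGE_NODE_COST_PER_MONTH:
        return "edge", "cost"
    return "cloud", "cost"

# Hypothetical workloads used to exercise the rule.
vision = Workload("vision-inspection", 20.0, 60.0, 500.0, False, False)
reports = Workload("weekly-reports", 5000.0, 60.0, 100.0, False, False)
```

Checking sovereignty, latency, and resilience before cost reflects one reasonable ordering: a workload that cannot legally or physically run in the cloud is placed at the edge regardless of price, and economics decides only the remaining cases.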

Edge Deployment Roadmap & Site Rollout

While capabilities are readied, the team locks the edge deployment roadmap and site rollout against geographies, footprint profiles, and network environments. Network activation, partner coordination, onboarding waves, and deployment sequencing are aligned to connectivity readiness, hardware availability, and jurisdictional data requirements. Designed for resilience and adaptability, the rollout framework accommodates evolving operating conditions without disrupting deployment sequence or infrastructure readiness.

Edge Security, Operating Model & Governance

During execution, governance is rebuilt around an integrated edge operating model spanning security, patch management, fleet governance, and operational accountability. Decision rights across IT, OT, infrastructure, and cybersecurity teams are formally documented to reduce operational ambiguity and improve coordination across distributed environments. This governance framework also establishes the operational discipline required for operational excellence initiatives to scale consistently across field operations and remote infrastructure environments.

Latency, Reliability & Edge Outcome Refinement

As outcomes begin to materialize, the edge program is evaluated against the metrics leadership prioritizes most: deterministic latency, uptime reliability, operational resilience, and sustained infrastructure efficiency. Workload-level latency, fleet uptime, incident-resolution time, inference economics, and connectivity-stability indicators feed directly into executive operating reviews so deployment priorities and workload placement can evolve alongside changing operational demands.

Outcomes Clients Can Expect

- Lower inference and connectivity costs with stronger CapEx efficiency on edge nodes across the fleet.

- New latency-enabled product features and tighter customer-facing service levels on real-time workflows.

- Higher field and plant-floor productivity as edge-enabled workflows reduce the dependency on centralized compute.

- Stable uptime, deterministic latency, and faster incident-resolution time at edge sites under operational load.

- Stronger data-sovereignty, OT-cyber, and connectivity-resilience posture as governance is built into the operating model.

Why Edge Computing Matters Now

AI-inference economics has accelerated the urgency of edge computing investment, and the resulting operational pressures are now firmly on the executive agenda. As AI workloads scale, organizations are increasingly deploying edge architectures to control cloud-egress costs, reduce inference expense, and improve operational responsiveness — making edge strategy as much a financial decision as an infrastructure one. At the same time, evolving data-sovereignty requirements across the EU, India, China, and other jurisdictions are pushing distributed architectures from optional capability to operational necessity. Together, those forces are why edge computing consulting services are now graded against the same operating discipline as core infrastructure programs — not as a side experiment, but as a CapEx-line capability with auditable economics.

Industrial operations, automation environments, and real-time service models also require deterministic latency and localized processing that centralized infrastructure cannot consistently deliver. As a result, today’s C-suite evaluates edge computing consulting not by connectivity claims alone, but by its ability to improve resilience, align with sovereignty requirements, control infrastructure economics, and sustain operational performance at scale.

Operationalize Edge Computing with P&C Global

C-suite leaders advancing edge computing programs bring P&C Global in to design and run the program where strategy meets execution, not after the slide deck has shipped. Operator-led teams stay through to sustained uptime, latency, and unit-cost outcomes.

Frequently Asked Questions — Edge Computing Advisory

P&C Global approaches edge computing advisory as an operator-led execution discipline focused on measurable operational performance rather than infrastructure deployment alone. Our teams work directly with executive leadership to align workload placement, distributed operations, OT governance, infrastructure economics, cybersecurity, and operational resilience within a unified execution model designed to sustain value beyond initial rollout. Rather than treating edge computing as a standalone architecture or integration initiative, P&C Global integrates diagnostic assessment, topology strategy, workload modeling, rollout governance, fleet operations, and continuous performance optimization into a coordinated enterprise operating program. The result is an edge capability designed not only to improve latency and connectivity performance, but also to strengthen uptime, control infrastructure costs, align with sovereignty requirements, and reduce operational and cybersecurity risk as distributed environments scale.

Field-engineering compensation, executive scorecards, and partner economics are the levers that determine whether an edge program lands or quietly reverts. The edge computing consulting engagement reviews the existing scorecards and partner contracts against the new topology and operating model, recommends adjustments to mix and accelerators that match the rollout phases, and works with finance, IT, OT, and security leadership on the change. Stage six measurement is wired so the operating review surfaces incentive-driven behavior — site-team patching cadence, escalation patterns, partner SLAs — early enough to correct.

P&C Global, an edge computing consulting firm, tailors scope to the client’s situation. A short-form diagnostic produces the use-case baseline, placement-economics picture, and prioritized rollout list; a multi-quarter implementation program runs the rollout, governs the operating cadence, and stays through the first measurement cycle. Both are scoped to the KPI baseline the client wants to defend. The work is matched to the decision the executive team is making (whether that decision is sequencing the rollout, modeling placement economics, or wiring security and observability into the operating review), not selected from a fixed menu.

Modern edge computing consulting motions touch personal, operational, and clinical data through node-level telemetry, identity systems, and OT-control surfaces, so the architecture has to align with NIST CSF, ISO 27001, GDPR (data residency), HIPAA, and IEC 62443 for OT without strangling the deployment cadence. The engagement maps the data flows, designs residency, retention, and patch-management rules into the architecture, and works with the client’s security, OT, and privacy teams on the controls that follow. P&C Global itself maintains ISO 27001 and SOC 2 certifications, so compliance is a discipline the firm lives by, not just designs for clients. Outputs are framed as designing client systems to align with the standards — not as certifying compliance, which sits with the client’s own controls.

The cold-chain case documents a logistics operator whose distributed temperature and route telemetry had outgrown centralized analysis; P&C Global wired AI-driven precision into the edge fleet so reliability and waste-reduction metrics held against a defined operating cadence. That work is published as a documented cold-chain logistics outcome. The research note advances the thesis that proximity is becoming the decisive variable in data-center development, with commercial real estate (CRE) operators trading raw scale for power-and-latency adjacency, and is published as a research note on CRE’s edge in data-center development. Read together, the two pieces illustrate the move from edge thesis to measured outcome — and the governance discipline that decides which side of that line a program lands on.

New edge computing engagements typically begin with a structured working session involving a named C-suite sponsor — often the CIO or COO — and the IT, OT, and security leaders who will carry the rollout; the diagnostic frame, KPI baseline, and decision calendar are agreed before any architecture work starts. P&C Global brings adjacent capabilities in parallel rather than sequentially: digital-product roadmap revisions where edge unlocks new features, supply-chain redesign that depends on the telemetry the fleet now delivers, and the operational-excellence work the field operating model has to absorb. The cross-silo model means the same accountable team carries through into those workstreams rather than handing off to a new firm. C-suite leaders advancing edge programs can contact P&C Global to schedule the working session.
